The Meltdown That Brought Our Startup to Its Knees for 15 Hours

As a founder, I always thought I’d be ready when the shit hit the fan. Last Friday, I learned how wrong I was…

Knock, knock.

When the first knock came at 7:30AM, I was still dreaming.

Knock, knock, knock.

Drifting between deep sleep and a groggy haze, I lifted my head, wondering where that obnoxious noise was coming from.

KNOCK KNOCK KNOCK KNOCK KNOCK

At this point, there was no mistaking it: the banging was coming from my own front door.

Why would anyone come knocking this early?

My thoughts cloudy, my eyelids heavy, and still wearing only my boxers, I clumsily stumbled down the stairs and pulled open the door to find my confused neighbor (and good friend), Jon, holding out his cell phone.

“It’s Adam.”

Still half-asleep, I didn’t even think to question this bizarre scene. I took his phone.

“Adam?”

“Dude, what the hell? I’ve been trying to call you. The app has been down for eleven hours.”

In the two seconds it took my foggy brain to process Adam’s words, time stood still.

And that’s when my jaw dropped, and a wrecking ball planted itself firmly in the pit of my stomach.

No Time To Be Surprised

I was wide awake instantly. I handed Jon his phone back, sprinted back up the staircase and opened my laptop, doing my best to mentally prepare myself for the shit to hit the fan.

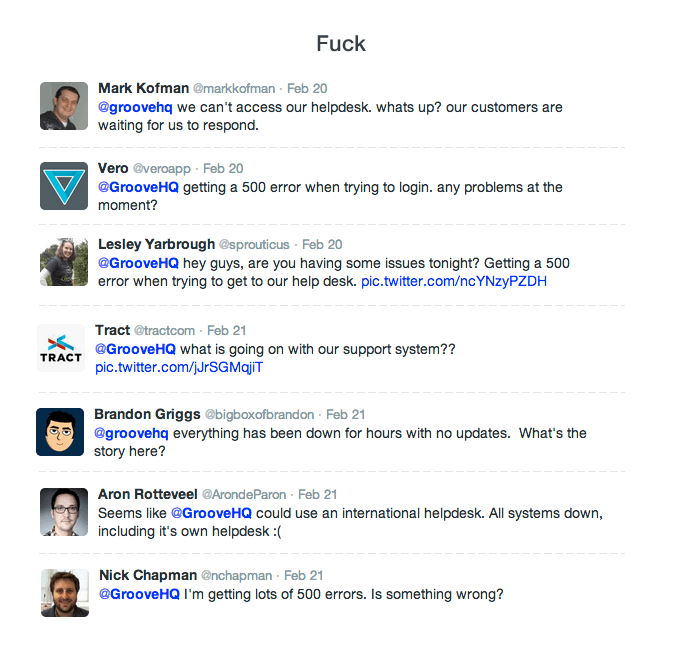

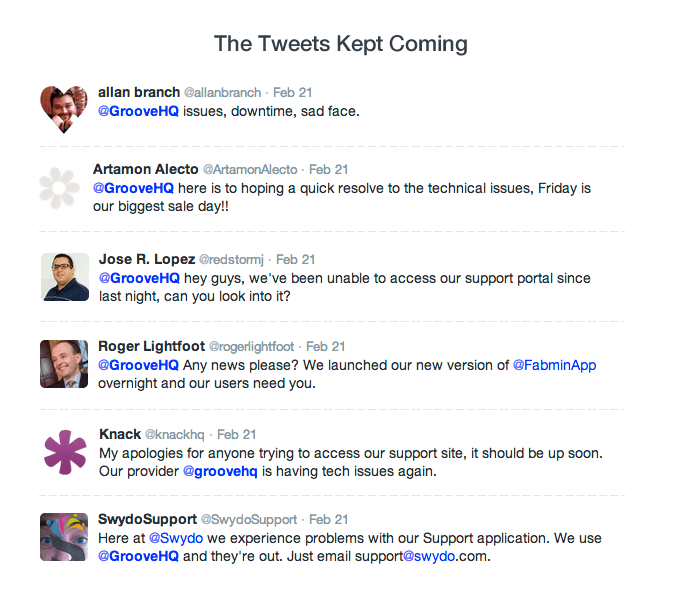

But as I saw as soon as I opened Twitter, it already had.

The outage had been going on for the entire night, and our customers on the other side of the globe had been dealing with it for an entire business day without so much as a peep from us.

They were confused and concerned, and some were downright furious. As they should have been.

I grabbed my iPhone, which had died the night before, plugged it in and powered it on. My first calls were to Jordan and Chris, Groove’s lead developers, asking them to come online immediately.

We convened on HipChat and the team got to work trying to find the cause of the outage.

And when I say that the team got to work, I mean that Jordan and Chris got to work as I, a non-developer with very little technical expertise, helplessly stood by.

The first ten minutes, for me, were the most agonizing in Groove’s history.

While the developers worked, my mind raced.

There are few things worse than working your ass off for years to build a business, hustling every chance that you get, and running head-on into a disaster that’s out of your control and threatens to undo your dreams.

Resisting every urge to punch my computer screen, I began to help Adam respond to the barrage of incoming emails.

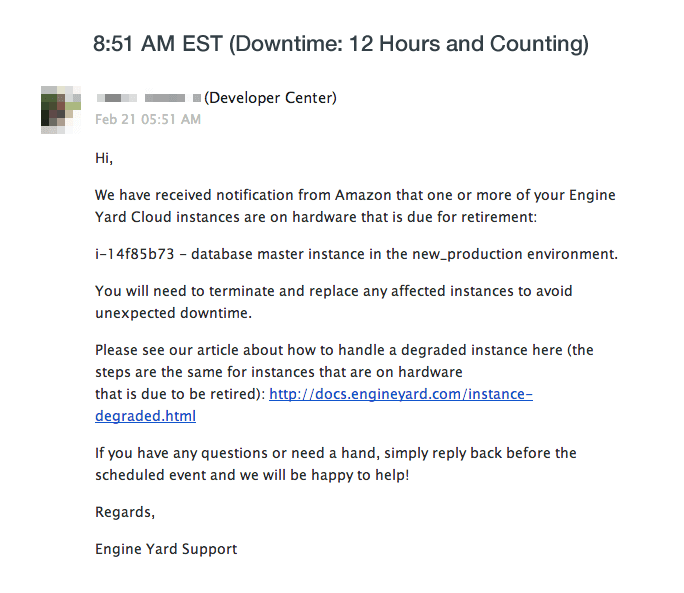

We wrote an email for our customers to make them aware of the outage, apologize and let them know that we were on it. As I gave it a final read-through, a pop-up notified me of an incoming message. It was from Engine Yard, our cloud server management company.

Unsure if the email had anything to do with our current issues, I shared it with the team on HipChat.

Almost immediately, Jordan shot back: “That’s it.”

Ten minutes later, on the phone with Engine Yard, Jordan learned that the server in question, scheduled to be retired in five days, had been mistakenly terminated the night before.

I was angry, but it wasn’t totally Engine Yard’s fault. Not even close.

If we had known about the outage when it happened, it wouldn’t have taken us very long to find the cause. And it certainly wouldn’t have been twelve hours before our worried customers heard from us.

You see, we had server monitoring in place. Except that it was set to send us email alerts in case of outages.

And since we don’t check email in the middle of the night, the entire team slept as the disaster unfolded.

It was an idiotic, absent-minded, careless, colossal fuck-up.

Takeaway: If you’re a SaaS company without a round-the-clock team, do this right now: set up your server monitoring service to call the personal phones of at least three team members in case of an outage. If we had done that, this story would be a lot less painful to write.
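For the technically inclined, here is a minimal sketch of the escalation idea in Python. It is not what we run, and it is not any monitoring vendor's API. Every name in it (`Contact`, `escalate`, `place_call`) is hypothetical, and the phone numbers are placeholders. The assumption is that your monitoring or telephony provider gives you some way to place a call and learn whether the person acknowledged it; `place_call` stands in for that hook.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class Contact:
    name: str
    phone: str  # placeholder numbers only in this sketch


def escalate(
    contacts: Sequence[Contact],
    place_call: Callable[[Contact, str], bool],
    message: str,
    min_contacts: int = 3,
) -> Optional[Contact]:
    """Phone each contact in order until someone acknowledges the alert.

    `place_call` is a hypothetical hook for whatever paging or telephony
    service you use. It should return True if the person acknowledged.
    Returns the contact who acknowledged, or None if nobody picked up.
    """
    # Refuse to run with a roster that is too thin to be reliable.
    if len(contacts) < min_contacts:
        raise ValueError(
            f"need at least {min_contacts} on-call contacts, got {len(contacts)}"
        )
    for contact in contacts:
        if place_call(contact, message):
            return contact
    return None


if __name__ == "__main__":
    # Hypothetical roster; swap in real people and a real call hook.
    roster = [
        Contact("on-call dev A", "+1-555-0100"),
        Contact("on-call dev B", "+1-555-0101"),
        Contact("founder", "+1-555-0102"),
    ]

    def fake_call(contact: Contact, message: str) -> bool:
        # Stand-in for a real phone call: only the founder "answers".
        print(f"calling {contact.name} at {contact.phone}: {message}")
        return contact.name == "founder"

    who = escalate(roster, fake_call, "App is down")
    print("acknowledged by:", who.name if who else "nobody")
```

The point of the `min_contacts` check is the same as the takeaway above: one phone can be dead on a nightstand, so the roster should never depend on a single person. A real setup would also want retries and a fallback when nobody acknowledges.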

A Surprising Response From Our Customers

I wanted to cry.

I wanted to throw up.

I wanted to board a plane, visit the office of every single customer who had been impacted, drop to my knees and beg for forgiveness.

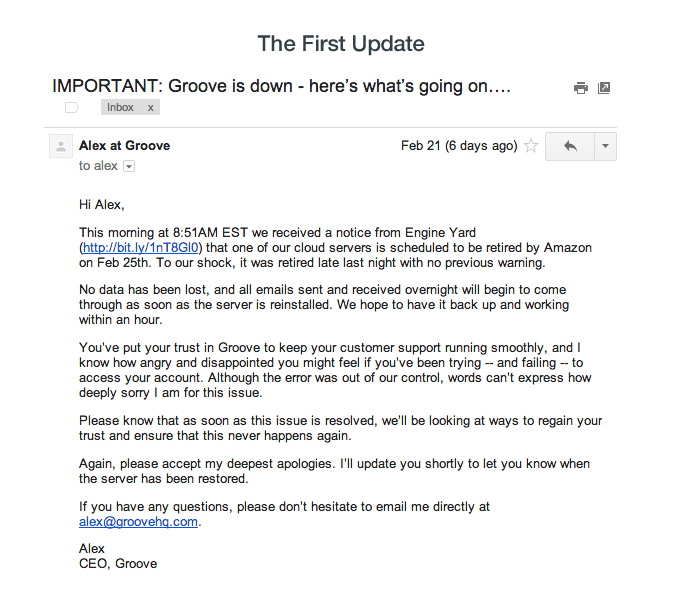

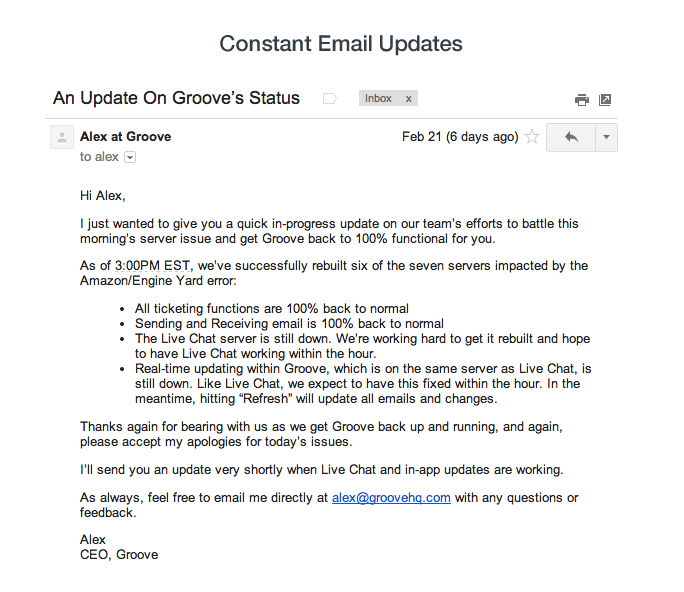

Instead, all I could do was send an email to update our customers:

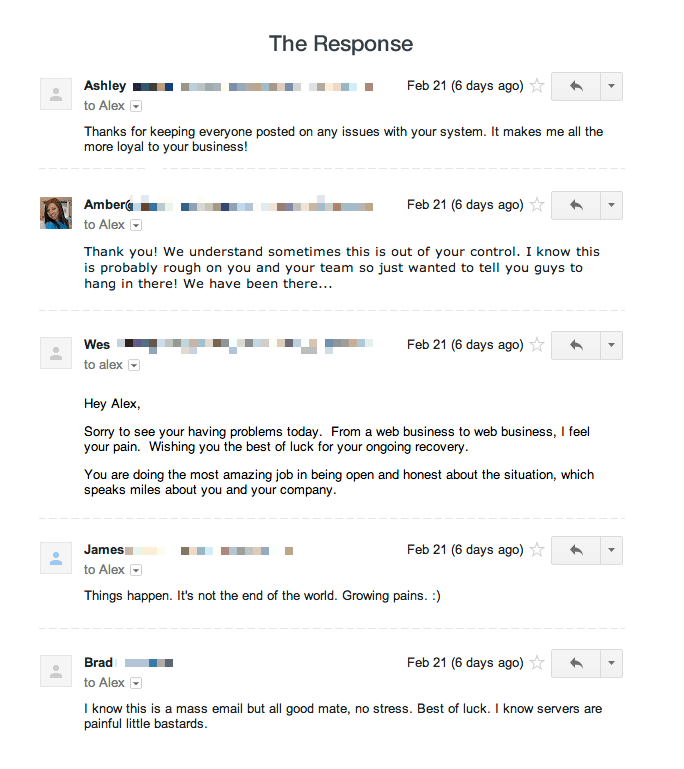

To my surprise, the majority of email responses that I received were supportive and understanding.

Still, as I continued to check in with our developers as the minutes passed, I couldn’t keep the worst out of my head:

- How many customers are we losing?

- How much trust are we losing?

- How are we going to recover from this?

For a while, things had been amazing.

January was Groove’s best month ever, revenue-wise, and February was shaping up to be even better.

The Small Business Stack had taken off, with more than 1,500 businesses signing up in the first two weeks.

We had just announced a huge new integration — with HipChat — the day before, with the HipChat team helping us promote the news and sending droves of new users to Groove.

How quickly it all seemed to start falling apart. I had just blogged a couple of weeks before about my biggest fear as a founder: letting down our customers.

Now, that fear was becoming reality.

Takeaway: Always remember that there are peaks and valleys. For startups, even when you think you’ve broken through the worst of it, shit can go downhill fast. No advice I could give would make this rollercoaster emotionally easier, but knowing that a steep drop is probably coming up can, at the very least, help you be more prepared to ride it.

Damage Control

Because the terminated server was our master database, our team needed to rebuild the entire cluster, which would take hours.

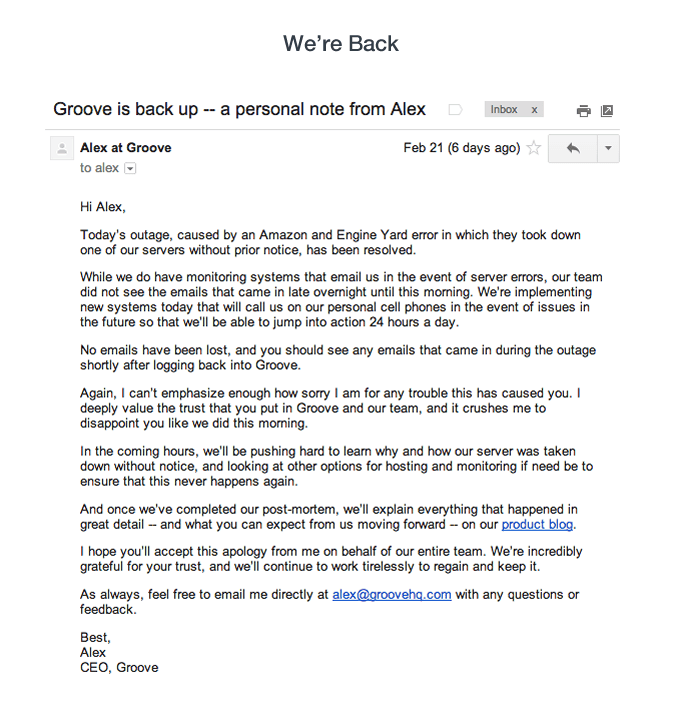

Throughout the process, we did our best to keep customers informed. The emails we pushed out throughout the day were among the most painful I’ve ever had to send, as I knew just how frustrated and angry I would be if I were in the readers’ shoes.

We also tweeted like crazy.

We overcommunicated, because if a service that I relied on heavily was inexplicably down, I’d want constant updates, too.

After five torturous hours, we finally got the app back up, and two hours later we restored it to full functionality.

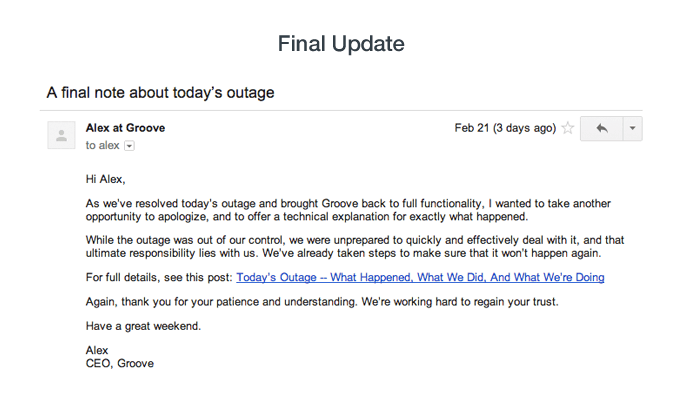

We also published a post on our product blog about the technical details of the outage.

Based on the conversations I’ve had with customers since the outage, our responsiveness and emails throughout the day prevented more than a few of them from jumping ship.

Takeaway: When disaster strikes, don’t leave your customers in the dark. Outages can happen to anyone (and they regularly do), but respecting your customers’ trust in you and keeping them in the know is critical. Get on Twitter. Get on email. Get wherever your customers are. Be communicative, be honest, be understanding, and be apologetic.

Planning for Next Time

Our entire team was 100% focused on the outage and customer communication related to it for the whole day.

That’s dozens of cumulative hours of productivity — and thousands of dollars in overhead — that would have otherwise gone to growing the business, instead of saving it.

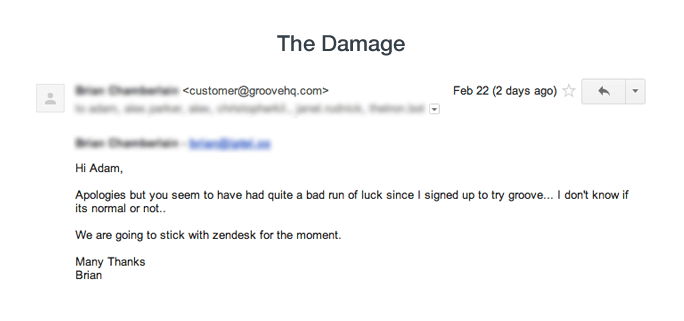

There are costs that we don’t fully know about yet, either.

While no customers cancelled their accounts on Friday, I know that some current trial prospects won’t convert over this.

We’re not sure what impact it will have on monthly churn, or new customer acquisition. We’re not sure if or how much we’ve damaged the Groove brand, and that kills me. But when we do have enough data to calculate that damage, I’ll update this post.

Ultimately, we were caught completely and utterly unprepared. While the Engine Yard error should never have happened, neither should our delayed response and subsequent scramble.

Since our launch, we’ve been working hard on enhancing Groove: features, UI improvements and bug fixes have all been huge priorities.

Unfortunately, we hadn’t spent as much time as we should have on fail-proofing our infrastructure. While we had our heads down, plowing through product development, our servers simply worked. And it took an awful wake-up call like this for us to realize that we needed to do better.

No longer will infrastructure be a “feature” to be weighed and prioritized against others in our backlog. It’s the foundation of everything we have, everything we do, and it will be treated as such.

Beyond simply upgrading our server monitoring to PagerDuty, we’ve now spent many more hours of developer time putting together a detailed plan to make our server infrastructure stronger and more stable.

We’re also working on a new push to share more — and more transparently — about the development/IT side of Groove, and not just our business growth. Stay tuned for more on that next week, including a public link to our detailed infrastructure improvement plan.

We’ll also be signing up for StatusPage.io (incidentally, a Groove customer) to help us in case of future outages.

And while I think we did pretty well communicating with our customers throughout the outage, we’ve also written a crisis communication plan that will help us spend less time flailing about before establishing contact with our customers next time (as much as I loathe saying it, for most businesses there will be a next time).

You can find a copy of that plan here.

As with all of my other regrets, if we had done these exercises a week ago, I might have been writing an entirely different post today.

Takeaway: Whether you have five customers or five thousand, spend time thinking about how you’ll react when — not if — major issues happen. Think about infrastructure. Think beyond delivering value to your customers, and think about what you’ll do when that value (temporarily) disappears.

Time to Move Forward

A lot of things went wrong last Friday that made me feel more panicked, upset and guilty than anything has in a very long time.

But a few things went right.

When we finally learned about the outage, our team jumped into action, and I’m proud of how we performed under pressure.

And when we began to talk with our customers about what was going on, I was surprised at the understanding and appreciative responses we were getting, and at how few people actually left that day.

To me, that’s a glimmer of hope that we’ve built something special.

Something that’s valuable enough that our customers are willing to forgive a massive screw-up (at least this once) and stick with us.

And while I’ll always remember the grey hairs I earned and the lessons I learned last Friday, that hope is why I’m not going to dwell on the issue any longer.

That hope is why we march on.