6 A/B Tests That Did Absolutely Nothing for Us

We’ve written about how A/B tests have helped us grow Groove. But that’s only the tip of the iceberg…

Imagine having a button that you could push to instantly double your conversion rate, without having to do any hard work, research or trial and error.

Sounds pretty amazing, doesn’t it?

It’d sure make the struggles of being a startup founder (the long days, the never-ending roadblocks, the constant fear) a whole lot easier to battle through.

Seeing blog posts nearly every day about how a company doubled, tripled, or quadrupled their results with the simple flick of a switch — even on this very blog — can make it easy to believe that such a button exists.

But one of the hardest lessons that I’ve had to learn as an entrepreneur is that, try as I might, I can’t find that button.

And I likely never will.

What we don’t see when we look at those “big win” case studies is the hundreds (sometimes thousands) of tests that had to completely flop before success happened “with a simple test.”

I know we’ve been guilty of only showing the tip of the iceberg ourselves, if only because the background work doesn’t make for very interesting reading.

But I think it’s important for anyone looking for a “magic bullet” to understand one simple truth: testing is mostly failure after failure. If we’re lucky, we find a statistically significant win after a few weeks. Most of the time, we only see results after months of iteration.

To illustrate the point, I thought I’d share some tests that are frequently pointed to as “easy wins” that did absolutely nothing for Groove.

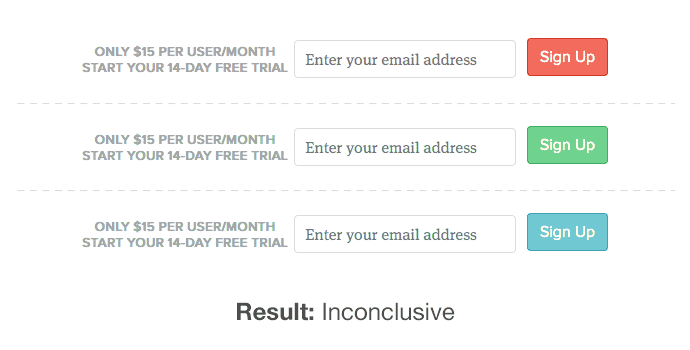

1) Signup Button Color

There’s a lot of psychology research that points to the impact various colors make on our behavior, and a lot of companies have gotten results from testing button color changes. We didn’t get the same result.

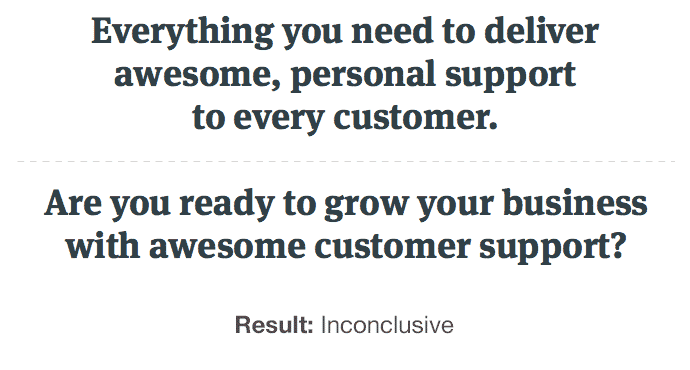

2) Homepage Headline

Headline/messaging tests have produced big wins for us, and we’ll share those results later, but we’ve had dozens of tests end up like the one above.

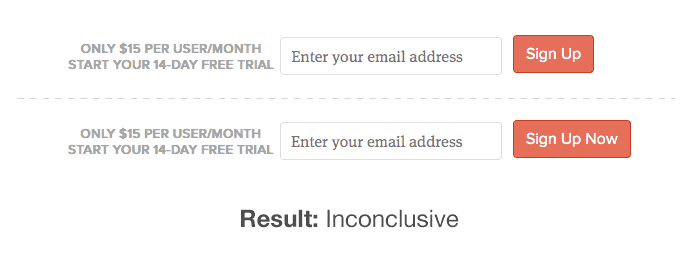

3) Signup Call to Action

We tried to add some urgency to our call to action in the hopes that it would get more visitors to act. Didn’t happen.

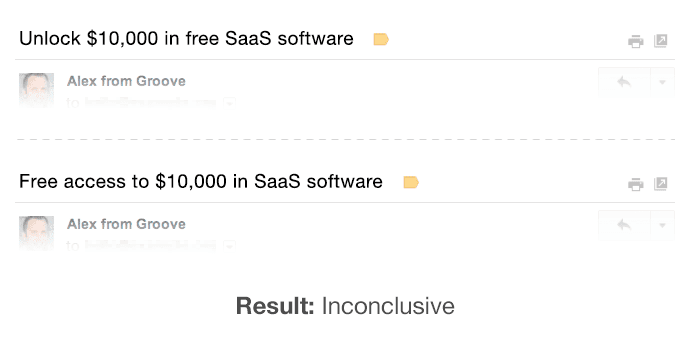

4) Email Subject Line

I have a friend who found that putting “free access” in her subject lines had a massive impact on her open rates. Not here.

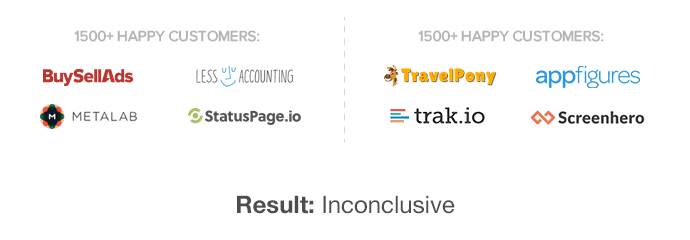

5) Customer Logos

We thought we’d try showing customer examples from a variety of industries in hopes of determining which logos were most relatable to visitors. No difference.

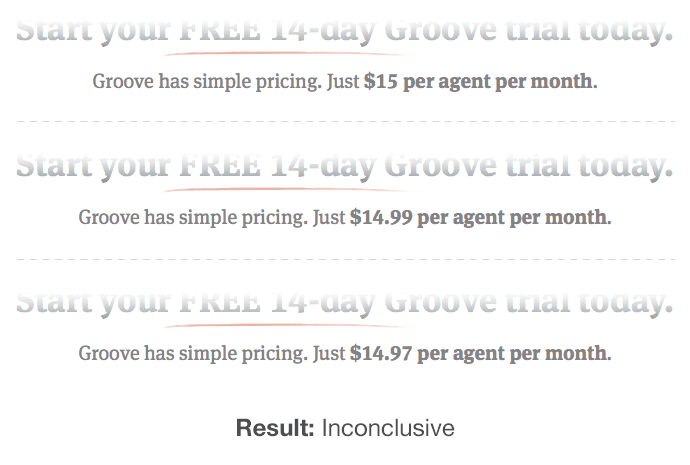

6) Pricing

Fierce forum debates have erupted over whether a price should end in .00, .99 or .97. In our homepage test, it didn’t matter.

How to Apply This to Your Business

The fact that these tests failed for us doesn’t mean that they won’t work for you.

It also doesn’t mean that they’ll fail for us in a week, or a month, or a year.

It just means that at the time we ran the test, it didn’t produce any statistically significant change — positive or negative — in our results.
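If you want to check for yourself whether a difference between two variants is statistically significant, here is a minimal sketch of one common approach: a two-sided two-proportion z-test. This is not necessarily the method behind the tests in this post. The function name and the visitor and conversion counts are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_a, conv_b: number of conversions in variants A and B
    n_a, n_b:       number of visitors shown variants A and B
    Returns (z, p_value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b

    # Pooled conversion rate under the null hypothesis of "no difference".
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("Cannot test: pooled rate is 0 or 1.")

    z = (rate_b - rate_a) / std_err

    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: 1,000 visitors per variant, 50 vs. 54 signups.
z, p = two_proportion_z_test(50, 1000, 54, 1000)
print(f"z = {z:.2f}, p = {p:.2f}")
```

With numbers like these, the p-value comes out well above the usual 0.05 threshold, so the 8% relative "lift" is indistinguishable from noise. That's what most of the tests above looked like.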

I’ve said it before, but one of the most important lessons we’ve learned as a business is that big, long-term results don’t come from tactical tests; they come from doing the hard work of research, customer development and making big shifts in messaging, positioning and strategy.

Then A/B testing can help you optimize your already validated approach.

But my hope is that this post lifted the veil a bit on the standard “how we doubled our conversions with a simple test” case studies that so many of us have published.

Testing can deliver great results.

But be ready for a long, slow haul.